Schema validation

Why run schema validation?

How do you know that your Kubernetes manifests are syntactically valid? Are you sure you don’t have any invalid data types? Are any mandatory fields missing? Most often, we only become aware of these misconfigurations at the worst possible time: when trying to deploy them.

How does it work?

There is no need to set up anything: every Kubernetes manifest that Datree scans is validated against the schema before the rules from the Centralized policy are executed.

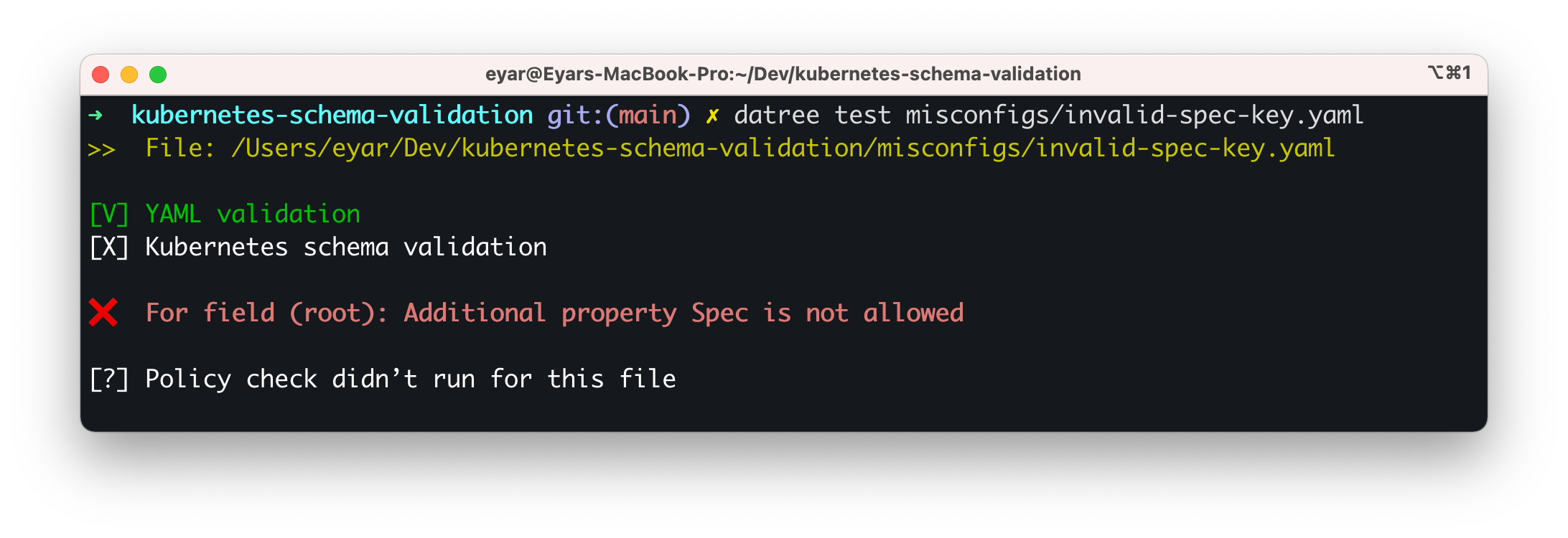

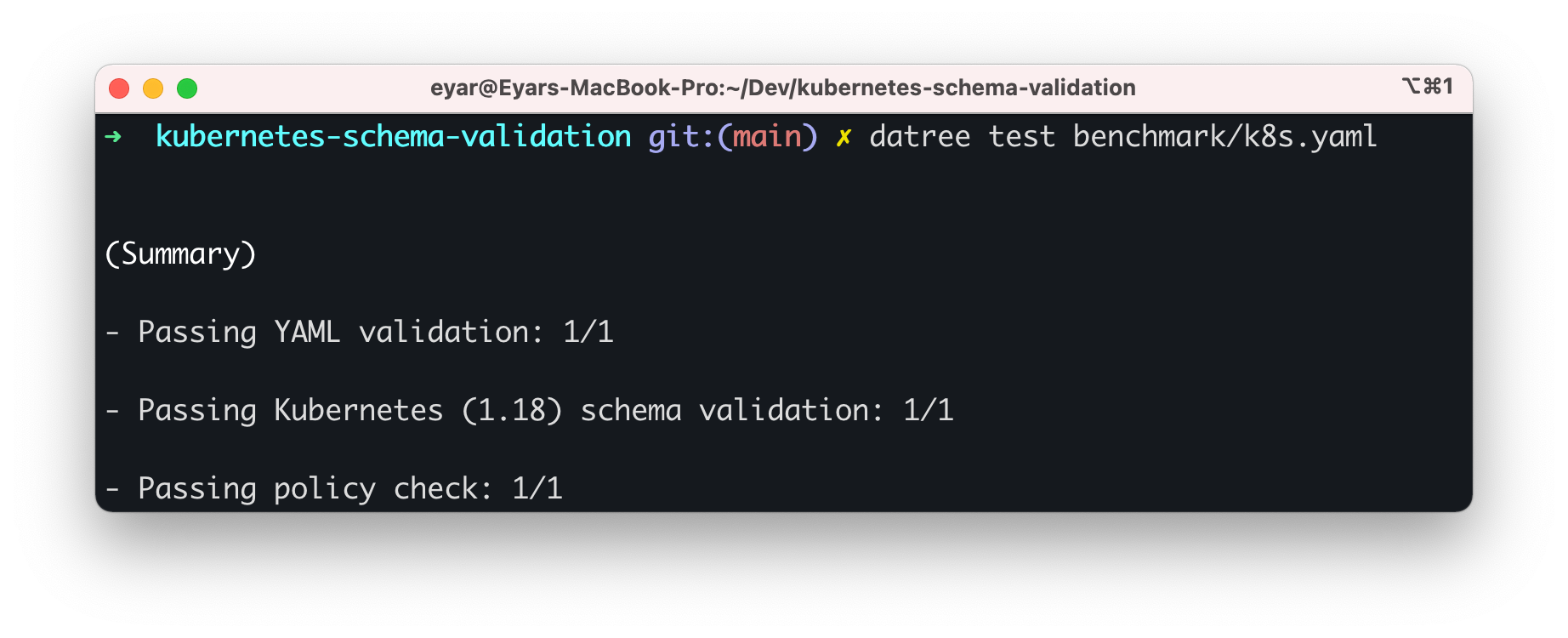

If the manifest schema is invalid, the policy check is not run. This way, you can be sure that every file Datree scans is also a valid Kubernetes file. Under the hood, Datree uses kubeconform to provide the schema validation capability.

| [X] Failing schema validation | [V] Passing schema validation |
|---|---|
|  |  |

Changing the (default) schema version

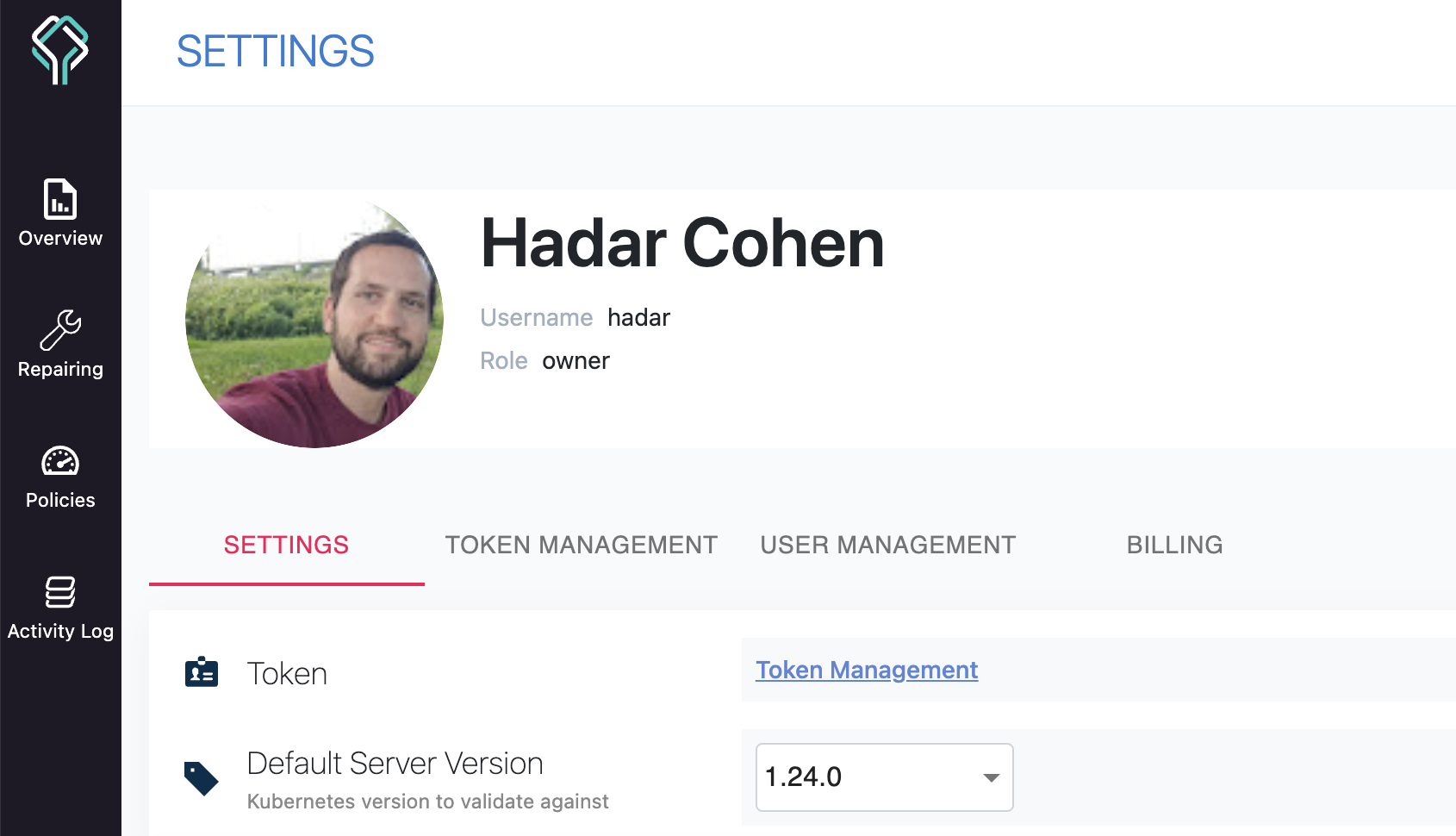

To achieve optimal coverage, the schema version used for validation should match the version of your Kubernetes cluster. By default, the Kubernetes schema version is 1.24.0.

Globally

If you want to change the default version to match your Kubernetes cluster version, you can do so from the SETTINGS page.

Locally

This functionality is useful if you want to migrate to a new Kubernetes version. This way, you can set the version and start the process of evaluating which configurations must be changed to support the cluster upgrade.

Passing a different schema version to the CLI overrides the global schema version that is set on the SETTINGS page.

The CLI accepts the --schema-version flag (short form -s) with a version number passed as a string:

$ datree test --schema-version "1.25.0" ~/.datree/k8s-demo.yaml
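When evaluating a cluster upgrade, it can help to run the same manifest against several schema versions in one go. The following is a minimal sketch, not part of Datree itself: `check_versions` and the `DATREE_BIN` variable are hypothetical names introduced for illustration, and the sketch assumes the CLI exits with a non-zero status when a check fails.

```shell
#!/bin/sh
# Hypothetical helper: test one manifest against several schema versions.
# Usage: check_versions MANIFEST VERSION...
# Example: check_versions ~/.datree/k8s-demo.yaml 1.24.0 1.25.0
#
# DATREE_BIN is an assumption of this sketch; it defaults to "datree".
check_versions() {
  manifest=$1
  shift
  failed=""
  for version in "$@"; do
    # Assumes a non-zero exit status means the check did not pass.
    if "${DATREE_BIN:-datree}" test --schema-version "$version" "$manifest"; then
      echo "PASS $version"
    else
      echo "FAIL $version"
      failed="$failed $version"
    fi
  done
  # Succeed only if every version passed.
  [ -z "$failed" ]
}
```

The function returns a non-zero status if any version fails, so it can gate a CI step while still reporting every version that needs attention.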

CRD support

When the --schema-location flag is not set, the CLI will automatically look for schemas in the following locations:

- kubernetes-json-schema - a repository that contains schemas of native Kubernetes objects.

- CRDs-catalog - a repository that aggregates over 100 popular CRDs.

As a result, Datree supports a large variety of CRDs out-of-the-box.

Using CRDs that are not listed in the catalog?

The CLI supports passing one or more schema locations, either HTTP(S) URLs or local filesystem paths. It looks for schema definitions in each of them, in order, stopping as soon as a matching file is found.

- If the --schema-location value does not end with `.json`, the CLI will assume the file name structure is identical to that of kubernetes-json-schema.

- If the --schema-location value ends with `.json`, the CLI will also accept a file name structure containing a Go template string that indicates how to search for JSON schemas.

Therefore, the following command lines are equivalent:

$ datree test fixtures/valid.yaml

$ datree test fixtures/valid.yaml --schema-location 'https://raw.githubusercontent.com/yannh/kubernetes-json-schema/master/{{.NormalizedKubernetesVersion}}-standalone{{.StrictSuffix}}/{{.ResourceKind}}{{.KindSuffix}}.json' --schema-location 'https://raw.githubusercontent.com/datreeio/CRDs-catalog/main/{{.Group}}/{{.ResourceKind}}_{{.ResourceAPIVersion}}.json'
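The "first match wins" lookup order described above can be illustrated with a small sketch. It only covers local directories and is not Datree's actual implementation; `find_schema` is a hypothetical helper name, and the schema file name is assumed to be already resolved.

```shell
#!/bin/sh
# Hypothetical helper: find_schema FILE DIR...
# Checks each directory in the order given and prints the first DIR/FILE
# that exists. Returns non-zero if no location contains the file.
find_schema() {
  file=$1
  shift
  for dir in "$@"; do
    if [ -f "$dir/$file" ]; then
      printf '%s\n' "$dir/$file"
      return 0   # stop at the first match; later locations are ignored
    fi
  done
  return 1
}
```

Because the search stops at the first hit, a location listed earlier shadows a schema with the same file name in a later location, so the order of the --schema-location flags matters.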

Go template variables you can use with --schema-location

NormalizedKubernetesVersion - Kubernetes Version, prefixed by v

StrictSuffix - "-strict" or "" depending on whether validation is running in strict mode or not

ResourceKind - Kind of the Kubernetes Resource

ResourceAPIVersion - Version of API used for the resource - "v1" in "apiVersion: monitoring.coreos.com/v1"

Group - the group name as stated in this resource's definition - "monitoring.coreos.com" in "apiVersion: monitoring.coreos.com/v1"

KindSuffix - suffix computed from apiVersion - for compatibility with Kubeval schema registries
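To see how these variables turn a template into a concrete schema path, the substitution can be imitated in plain shell. This is only an illustration, covering three of the variables and only the `{{.Name}}` form without spaces; the CLI itself uses Go templates. The function names, the `/schemas` path, and the sample values (including the lowercase kind) are assumptions made for the example.

```shell
#!/bin/sh
# Escape characters that are special in a sed replacement:
# backslash, ampersand, and the | delimiter used below.
escape_replacement() {
  printf '%s' "$1" | sed 's/[\\&|]/\\&/g'
}

# Hypothetical helper: render_schema_location TEMPLATE GROUP KIND API_VERSION
# Substitutes {{.Group}}, {{.ResourceKind}} and {{.ResourceAPIVersion}}.
render_schema_location() {
  printf '%s\n' "$1" | sed \
    -e "s|{{\.Group}}|$(escape_replacement "$2")|g" \
    -e "s|{{\.ResourceKind}}|$(escape_replacement "$3")|g" \
    -e "s|{{\.ResourceAPIVersion}}|$(escape_replacement "$4")|g"
}

# Sample values taken from "apiVersion: monitoring.coreos.com/v1".
render_schema_location \
  '/schemas/{{.Group}}/{{.ResourceKind}}_{{.ResourceAPIVersion}}.json' \
  monitoring.coreos.com prometheus v1
# prints /schemas/monitoring.coreos.com/prometheus_v1.json
```

Rendering a template by hand like this is a quick way to check that the files in a schema directory are named the way the template expects.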

How to extract your CRDs' schemas to validate your manifests

The CRDs-catalog repository also contains a small and simple utility (aka CRD Extractor) to pull all the CRDs from your cluster and extract their schemas in JSON format. This way, you will have full coverage when "shifting left" your CRDs schema validation.

After running the utility, simply add the --schema-location parameter followed by the location in which the CRDs reside:

$ datree test myCustomResource.yaml --schema-location "$HOME/.datree/crdSchemas/{{.ResourceKind}}_{{.ResourceAPIVersion}}.json"

To avoid needing to set the --schema-location argument for every policy check, you can submit public CRD schemas to the CRDs-catalog where they will be used automatically. Simply open a pull request with your CRDs in JSON schema format.